We are pleased to inform you about the collaboration between Garry Kasparov and Shibuya Productions. It is the first time that a foreign manga is featured. In the manga world, it is similar to a scientist being featured in Nature.

BLITZ THE MANGA

Tom, a young high school student, has a crush on the beautiful Harmony. When he learns that she is passionate about chess, he decides to join the college club. But he doesn’t know the rules! He has no choice: he must learn everything and practice seriously.

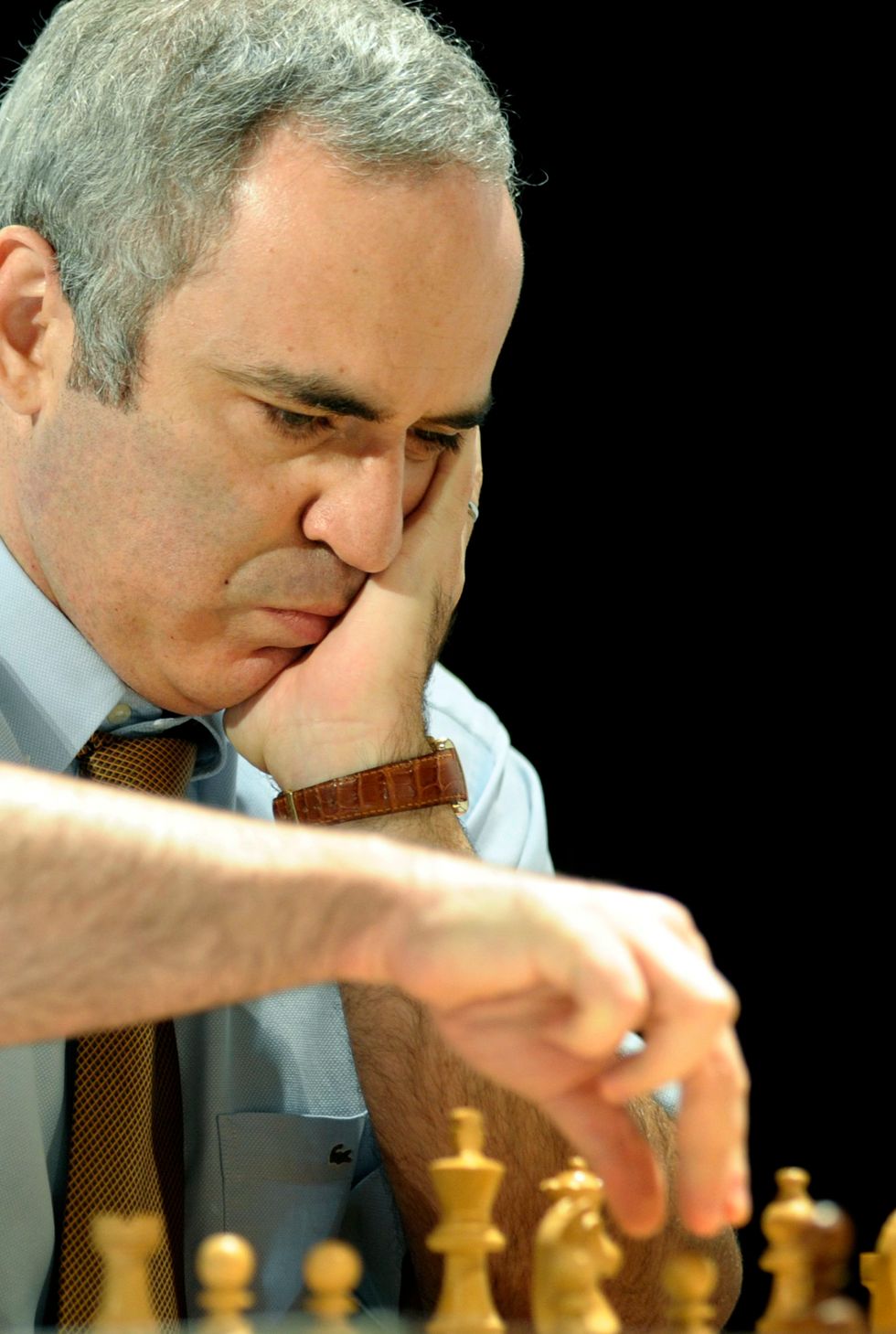

He soon discovers the existence of Garry Kasparov, the greatest player in the history of chess.

During his research, Tom comes across a virtual reality machine that allows him to analyze the master's most legendary games!

Then an unexpected event opens the doors of top-level chess to Tom, in spite of himself…

BLITZ THE GAME

Are you familiar with blitz, the ultra-fast variant of chess? To celebrate the release of the manga, Shibuya Productions invites you to carry the Blitz app in your pocket!

Compete against other players or the A.I. in games where time and strategy will decide your fate. Who will become the absolute Blitz master?

* Blitz is created by Cedric Biscay in French, then translated, with many exchanges in Japanese with the Japanese artist Daitaro Nishihara. That is why the manga is available simultaneously in French and Japanese.

* Blitz is mainly created between Monaco and Japan, which is unique in the history of manga.

Download the game for free from Google Play HERE!

Download the game for free from the Apple App Store HERE!